28. Natural Language Processing with Python

By Bernd Klein. Last modified: 19 Feb 2025.

Introduction

We mentioned in the introductory chapter of our tutorial that a spam filter for emails is a typical example of machine learning. Emails are based on text, which is why a classifier to classify emails must be able to process text as input. If we look at the previous examples with neural networks, they always run directly with numerical values and have a fixed input length. In the end, the characters of a text also consist of numerical values, but it is obvious that we cannot simply use a text as it is as input for a neural network. This means that the text have to be converted into a numerical representation, e.g. vectors or arrays of numbers.

We will learn in this tutorial how to encode text in a way which is suitable for machine processing.

Live Python training

See our Python training courses

Bag-of-Words Model

If we want to use texts in machine learning, we need a representation of the text which is usable for Machine Learning purposes. This means we need a numerical representation. We cannot use texts directly.

In natural language processing and information retrievel the bag-of-words model is of crucial importance. The bag-of-words model can be used to represent text data in a way which is suitable for machine learning algorithms. Furthermore, this model is easy and efficient to implement. In the bag-of-words model, a text (such as a sentence or a document) is represented as the so-called bag (a set or multiset) of its words. Grammar and word order are ignored.

We will use in the following a list of three strings to demonstrate the bag-of-words approach. In linguistics, the collection of texts used for the experiments or tests is usually called a corpus:

corpus = ["To be, or not to be, that is the question:",

"Whether 'tis nobler in the mind to suffer",

"The slings and arrows of outrageous fortune,"]

We will use the submodule text from sklearn.feature_extraction. This module contains utilities to build feature vectors from text documents.

from sklearn.feature_extraction import text

CountVectorizer is a class in the module sklearn.feature_extraction.text. It's a class useful for building a corpus vocabulary. In addition, it produces the numerical representation of text that we need, i.e. Numpy vectors.

First we need an instance of this class. When we instantiate a CountVectorizer, we can pass some optional parameters, but it is possible to call it with no arguments, as we will do in the following. Printing the vectorizer gives us useful information about the default values used when the instance was created:

vectorizer = text.CountVectorizer()

print(vectorizer)

OUTPUT:

CountVectorizer()

We have now an instance of CountVectorizer, but it has not seen any texts so far. We will use the method fit to process our previously defined corpus. We learn a vocabulary dictionary of all the tokens (strings) of the corpus:

vectorizer.fit(corpus)

OUTPUT:

CountVectorizer()CountVectorizer()

fit created the vocabulary structure vocabulary_. This contains the words of the text as keys and a unique integer value for each word. As the default value for the parameter lowercase is set to True, the To in the beginning of the text has been turned into to. You may also notice that the vocabulary contains only words without any punctuation or special character. You can change this behaviour by assigning a regular expression to the keyword parameter token_pattern of the fit method. The default is set to (?u)\\b\\w\\w+\\b. The (?u) part of this regular expression is not necessary because it switches on the re.U (re.UNICODE) flag for this expression, which is the default in Python anyway. The minimal word length will be two characters:

print("Vocabulary: ", vectorizer.vocabulary_)

OUTPUT:

Vocabulary: {'to': 18, 'be': 2, 'or': 10, 'not': 8, 'that': 15, 'is': 5, 'the': 16, 'question': 12, 'whether': 19, 'tis': 17, 'nobler': 7, 'in': 4, 'mind': 6, 'suffer': 14, 'slings': 13, 'and': 0, 'arrows': 1, 'of': 9, 'outrageous': 11, 'fortune': 3}

If you only want to see the words without the indices, you can use the method get_feature_names_out:

print(vectorizer.get_feature_names_out())

OUTPUT:

['and' 'arrows' 'be' 'fortune' 'in' 'is' 'mind' 'nobler' 'not' 'of' 'or' 'outrageous' 'question' 'slings' 'suffer' 'that' 'the' 'tis' 'to' 'whether']

Alternatively, you can apply keys to the vocaulary to keep the ordering:

print(list(vectorizer.vocabulary_.keys()))

OUTPUT:

['to', 'be', 'or', 'not', 'that', 'is', 'the', 'question', 'whether', 'tis', 'nobler', 'in', 'mind', 'suffer', 'slings', 'and', 'arrows', 'of', 'outrageous', 'fortune']

With the aid of transform we will extract the token counts out of the raw text documents. The call will use the vocabulary which we created with fit:

token_count_matrix = vectorizer.transform(corpus)

print(token_count_matrix)

OUTPUT:

(0, 2) 2 (0, 5) 1 (0, 8) 1 (0, 10) 1 (0, 12) 1 (0, 15) 1 (0, 16) 1 (0, 18) 2 (1, 4) 1 (1, 6) 1 (1, 7) 1 (1, 14) 1 (1, 16) 1 (1, 17) 1 (1, 18) 1 (1, 19) 1 (2, 0) 1 (2, 1) 1 (2, 3) 1 (2, 9) 1 (2, 11) 1 (2, 13) 1 (2, 16) 1

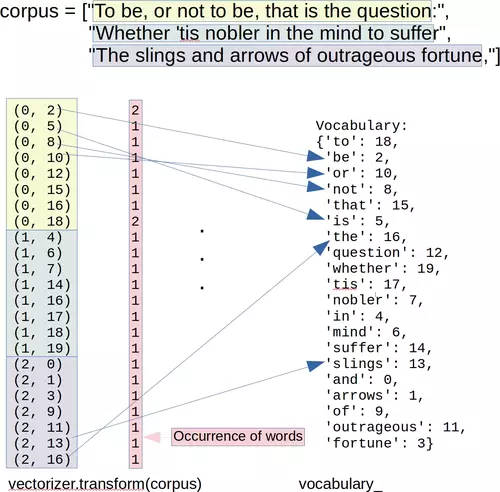

The connection between the corpus, the Vocabulary vocabulary_ and the vector created by transform can be seen in the following image:

We will apply the method toarray on our object token_count_matrix. It will return a dense ndarray representation of this matrix.

Just in case: You might see that people use sometimes todense instead of toarray.

Do not use todense!1

dense_tcm = token_count_matrix.toarray()

dense_tcm

OUTPUT:

array([[0, 0, 2, 0, 0, 1, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1, 1, 0, 2, 0],

[0, 0, 0, 0, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1],

[1, 1, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0]])

The rows of this array correspond to the strings of our corpus. The length of a row corresponds to the length of the vocabulary. The i'th value in a row corresponds to the i'th entry of the list returned by CountVectorizer method get_feature_names. If the value of dense_tcm[i][j] is equal to k, we know the word with the indexj in the vocabulary occurs k times in the string with the index i in the corpus.

This is visualized in the following diagram:

feature_names = vectorizer.get_feature_names_out()

for el in vectorizer.vocabulary_:

print(el, end=(", "))

OUTPUT:

to, be, or, not, that, is, the, question, whether, tis, nobler, in, mind, suffer, slings, and, arrows, of, outrageous, fortune,

import pandas as pd

pd.DataFrame(data=dense_tcm,

index=['corpus_0', 'corpus_1', 'corpus_2'],

columns=vectorizer.get_feature_names_out())

| and | arrows | be | fortune | in | is | mind | nobler | not | of | or | outrageous | question | slings | suffer | that | the | tis | to | whether | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| corpus_0 | 0 | 0 | 2 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | 1 | 0 | 1 | 0 | 0 | 1 | 1 | 0 | 2 | 0 |

| corpus_1 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 1 | 1 | 1 |

| corpus_2 | 1 | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 0 |

word = "be"

i = 1

j = vectorizer.vocabulary_[word]

print("number of times '" + word + "' occurs in:")

for i in range(len(corpus)):

print(" '" + corpus[i] + "': " + str(dense_tcm[i][j]))

OUTPUT:

number of times 'be' occurs in:

'To be, or not to be, that is the question:': 2

'Whether 'tis nobler in the mind to suffer': 0

'The slings and arrows of outrageous fortune,': 0

We will extract the token counts out of new text documents. Let's use a literally doubtful variation of Hamlet's famous monologue and check what transform has to say about it. transform will use the vocabulary which was previously fitted with fit.

txt = "That is the question and it is nobler in the mind."

vectorizer.transform([txt]).toarray()

OUTPUT:

array([[1, 0, 0, 0, 1, 2, 1, 1, 0, 0, 0, 0, 1, 0, 0, 1, 2, 0, 0, 0]])

print(vectorizer.get_feature_names_out())

OUTPUT:

['and' 'arrows' 'be' 'fortune' 'in' 'is' 'mind' 'nobler' 'not' 'of' 'or' 'outrageous' 'question' 'slings' 'suffer' 'that' 'the' 'tis' 'to' 'whether']

print(vectorizer.vocabulary_)

OUTPUT:

{'to': 18, 'be': 2, 'or': 10, 'not': 8, 'that': 15, 'is': 5, 'the': 16, 'question': 12, 'whether': 19, 'tis': 17, 'nobler': 7, 'in': 4, 'mind': 6, 'suffer': 14, 'slings': 13, 'and': 0, 'arrows': 1, 'of': 9, 'outrageous': 11, 'fortune': 3}

Word Importance

If you look at words like "the", "and" or "of", you will see see that they will occur in nearly all English texts. If you keep in mind that our ultimate goal will be to differentiate between texts and attribute them to classes, words like the previously mentioned ones will bear hardly any meaning. If you look at the following corpus, you can see words like "you", "I" or important words like "Python", "lottery" or "Programmer":

from sklearn.feature_extraction import text

corpus = ["It does not matter what you are doing, just do it!",

"Would you work if you won the lottery?",

"You like Python, he likes Python, we like Python, everybody loves Python!"

"You said: 'I wish I were a Python programmer'",

"You can stay here, if you want to. I would, if I were you."

]

vectorizer = text.CountVectorizer()

vectorizer.fit(corpus)

token_count_matrix = vectorizer.transform(corpus)

print(token_count_matrix)

OUTPUT:

(0, 0) 1 (0, 2) 1 (0, 3) 1 (0, 4) 1 (0, 9) 2 (0, 10) 1 (0, 15) 1 (0, 16) 1 (0, 26) 1 (0, 31) 1 (1, 8) 1 (1, 13) 1 (1, 21) 1 (1, 28) 1 (1, 29) 1 (1, 30) 1 (1, 31) 2 (2, 5) 1 (2, 6) 1 (2, 11) 2 (2, 12) 1 (2, 14) 1 (2, 17) 1 (2, 18) 5 (2, 19) 1 (2, 24) 1 (2, 25) 1 (2, 27) 1 (2, 31) 2 (3, 1) 1 (3, 7) 1 (3, 8) 2 (3, 20) 1 (3, 22) 1 (3, 23) 1 (3, 25) 1 (3, 30) 1 (3, 31) 3

tf_idf = text.TfidfTransformer()

tf_idf.fit(token_count_matrix)

tf_idf.idf_

OUTPUT:

array([1.91629073, 1.91629073, 1.91629073, 1.91629073, 1.91629073,

1.91629073, 1.91629073, 1.91629073, 1.51082562, 1.91629073,

1.91629073, 1.91629073, 1.91629073, 1.91629073, 1.91629073,

1.91629073, 1.91629073, 1.91629073, 1.91629073, 1.91629073,

1.91629073, 1.91629073, 1.91629073, 1.91629073, 1.91629073,

1.51082562, 1.91629073, 1.91629073, 1.91629073, 1.91629073,

1.51082562, 1. ])

tf_idf.idf_[vectorizer.vocabulary_['python']]

OUTPUT:

1.916290731874155

da = vectorizer.transform(corpus).toarray()

i = 0

# check how often the word 'would' occurs in the the i'th sentence:

#vectorizer.vocabulary_['would']

word_ind = vectorizer.vocabulary_['would']

da[i][word_ind]

da[:,word_ind]

OUTPUT:

array([0, 1, 0, 1])

word_weight_list = list(zip(vectorizer.get_feature_names_out(), tf_idf.idf_))

word_weight_list.sort(key=lambda x:x[1]) # sort list by the weights (2nd component)

for word, idf_weight in word_weight_list:

print(f"{word:15s}: {idf_weight:4.3f}")

OUTPUT:

you : 1.000 if : 1.511 were : 1.511 would : 1.511 are : 1.916 can : 1.916 do : 1.916 does : 1.916 doing : 1.916 everybody : 1.916 he : 1.916 here : 1.916 it : 1.916 just : 1.916 like : 1.916 likes : 1.916 lottery : 1.916 loves : 1.916 matter : 1.916 not : 1.916 programmer : 1.916 python : 1.916 said : 1.916 stay : 1.916 the : 1.916 to : 1.916 want : 1.916 we : 1.916 what : 1.916 wish : 1.916 won : 1.916 work : 1.916

from numpy import log

from sklearn.feature_extraction import text

corpus = ["It does not matter what you are doing, just do it!",

"Would you work if you won the lottery?",

"You like Python, he likes Python, we like Python, everybody loves Python!"

"You said: 'I wish I were a Python programmer'",

"You can stay here, if you want to. I would, if I were you."

]

n = len(corpus)

# the following variables are used globally (as free variables) in the functions :-(

vectorizer = text.CountVectorizer()

vectorizer.fit(corpus)

da = vectorizer.transform(corpus).toarray()

Term Frequency

We will first define a function for the term frequency.

Some notations:

- $f_{t, d}$ denotes the number of times that a term t occurs in document d

- ${wc}_d$ denotes the number of words in a document $d$

The simplest choice to define tf(t,d) is to use the raw count of a term in a document, i.e., the number of times that term t occurs in document d, which we can denote as $f_{t, d}$

We can define tf(t, d) in different ways:

- raw count of a term: $tf(t, d) = f_{t, d}$

- term frequency adjusted for document length: $tf(t, d) = \frac{f_{t, d}}{{wc}_d}$

- logarithmically scaled frequency: $tf(t, d) = \log(1 + f_{t, d})$

- augmented frequency, to prevent a bias towards longer documents, e.g. raw frequency of the term divided by the raw frequency of the most occurring term in the document: $tf(t, d) = 0.5 + 0.5 \cdot \frac{f_{t, d}}{\max_{t' \in d}{\{f_{t', d}\}} }$

def tf(t, d, mode="raw"):

""" The Term Frequency 'tf' calculates how often a term 't'

occurs in a document 'd'. ('d': document index)

If t_in_d = Number of times a term t appears in a document d

and no_terms_d = Total number of terms in the document,

tf(t, d) = t_in_d / no_terms_d

"""

if t in vectorizer.vocabulary_:

word_ind = vectorizer.vocabulary_[t]

t_occurences = da[d, word_ind] # 'd' is the document index

else:

t_occurences = 0

if mode == "raw":

result = t_occurences

elif mode == "length":

all_terms = (da[d] > 0).sum() # calculate number of different terms in d

result = t_occurences / all_terms

elif mode == "log":

result = log(1 + t_occurences)

elif mode == "augfreq":

result = 0.5 + 0.5 * t_occurences / da[d].max()

return result

We will check the word frequencies for some words:

print(" raw length log augmented freq")

for term in ['matter', 'python', 'would']:

for docu_index in range(len(corpus)):

d = corpus[docu_index]

print(f"\n'{term}' in '{d}''")

for mode in ['raw', 'length', 'log', 'augfreq']:

x = tf(term, docu_index, mode=mode)

print(f"{x:7.2f}", end="")

OUTPUT:

raw length log augmented freq 'matter' in 'It does not matter what you are doing, just do it!'' 1.00 0.10 0.69 0.75 'matter' in 'Would you work if you won the lottery?'' 0.00 0.00 0.00 0.50 'matter' in 'You like Python, he likes Python, we like Python, everybody loves Python!You said: 'I wish I were a Python programmer''' 0.00 0.00 0.00 0.50 'matter' in 'You can stay here, if you want to. I would, if I were you.'' 0.00 0.00 0.00 0.50 'python' in 'It does not matter what you are doing, just do it!'' 0.00 0.00 0.00 0.50 'python' in 'Would you work if you won the lottery?'' 0.00 0.00 0.00 0.50 'python' in 'You like Python, he likes Python, we like Python, everybody loves Python!You said: 'I wish I were a Python programmer''' 5.00 0.42 1.79 1.00 'python' in 'You can stay here, if you want to. I would, if I were you.'' 0.00 0.00 0.00 0.50 'would' in 'It does not matter what you are doing, just do it!'' 0.00 0.00 0.00 0.50 'would' in 'Would you work if you won the lottery?'' 1.00 0.14 0.69 0.75 'would' in 'You like Python, he likes Python, we like Python, everybody loves Python!You said: 'I wish I were a Python programmer''' 0.00 0.00 0.00 0.50 'would' in 'You can stay here, if you want to. I would, if I were you.'' 1.00 0.11 0.69 0.67

Document Frequency

The document frequency df of a term t is defined as the number of documents in the document set that contain the term t.

$df(t) = |{\{d \in D: t \in d\}}|$

Inverse Document Frequency

The inverse document frequency is a measure of how much information the word provides, i.e., if it's common or rare across all documents. It is the logarithmically scaled inverse fraction of the document frequency. The effect of adding 1 to the idf in the equation above is that terms with zero idf, i.e., terms that occur in all documents in a training set, will not be entirely ignored.

$idf(t) = log( \frac{n}{df(t)} ) + 1$

n is the number of documents in the corpus $n = |D|$

(Note that the idf formula above differs from the standard textbook notation that defines the idf as $idf(t) = log( \frac{n}{df(t) + 1})$.)

The formula above is used, when TfidfTransformer() is called with smooth_idf=False! If it is called with smooth_idf=True (the default) the constant 1 is added to the numerator and denominator of the idf as if an extra document was seen containing every term in the collection exactly once, which prevents zero divisions:

$idf(t) = log( \frac{n + 1}{df(t) + 1} ) + 1$

Term frequency–Inverse document frequency

$tf_idf$ is calculated as the product of $tf(t, d)$ and $idf(t)$:

$tf_idf(t, d) = tf(t, d) \cdot idf(t)$

A high value of tf–idf means that the term has a high "term frequency" in the given document and a low "document frequency" in the other documents of the corpus. This means that this wieght can be used to filter out common terms.

We will program the tf_idf function now:

The helpfile of text.TfidfTransformer explains how tf_idf is calculated:

We will manually program these functions in the following:

def df(t):

""" df(t) is the document frequency of t; the document frequency is

the number of documents in the document set that contain the term t. """

word_ind = vectorizer.vocabulary_[t]

tf_in_docus = da[:, word_ind] # vector with the freqencies of word_ind in all docus

existence_in_docus = tf_in_docus > 0 # binary vector, existence of word in docus

return existence_in_docus.sum()

#df("would", vectorizer)

def idf(t, smooth_idf=True):

""" idf """

if smooth_idf:

return log((1 + n) / (1 + df(t)) ) + 1

else:

return log(n / df(t) ) + 1

def tf_idf(t, d):

return idf(t) * tf(t, d)

res_idf = []

for word in vectorizer.get_feature_names_out():

tf_docus = []

res_idf.append([word, idf(word)])

res_idf.sort(key=lambda x:x[1])

for item in res_idf:

print(item)

OUTPUT:

['you', 1.0] ['if', 1.5108256237659907] ['were', 1.5108256237659907] ['would', 1.5108256237659907] ['are', 1.916290731874155] ['can', 1.916290731874155] ['do', 1.916290731874155] ['does', 1.916290731874155] ['doing', 1.916290731874155] ['everybody', 1.916290731874155] ['he', 1.916290731874155] ['here', 1.916290731874155] ['it', 1.916290731874155] ['just', 1.916290731874155] ['like', 1.916290731874155] ['likes', 1.916290731874155] ['lottery', 1.916290731874155] ['loves', 1.916290731874155] ['matter', 1.916290731874155] ['not', 1.916290731874155] ['programmer', 1.916290731874155] ['python', 1.916290731874155] ['said', 1.916290731874155] ['stay', 1.916290731874155] ['the', 1.916290731874155] ['to', 1.916290731874155] ['want', 1.916290731874155] ['we', 1.916290731874155] ['what', 1.916290731874155] ['wish', 1.916290731874155] ['won', 1.916290731874155] ['work', 1.916290731874155]

corpus

OUTPUT:

['It does not matter what you are doing, just do it!', 'Would you work if you won the lottery?', "You like Python, he likes Python, we like Python, everybody loves Python!You said: 'I wish I were a Python programmer'", 'You can stay here, if you want to. I would, if I were you.']

for word, word_index in vectorizer.vocabulary_.items():

print(f"\n{word:12s}: ", end="")

for d_index in range(len(corpus)):

print(f"{d_index:1d} {tf_idf(word, d_index):3.2f}, ", end="" )

OUTPUT:

it : 0 3.83, 1 0.00, 2 0.00, 3 0.00, does : 0 1.92, 1 0.00, 2 0.00, 3 0.00, not : 0 1.92, 1 0.00, 2 0.00, 3 0.00, matter : 0 1.92, 1 0.00, 2 0.00, 3 0.00, what : 0 1.92, 1 0.00, 2 0.00, 3 0.00, you : 0 1.00, 1 2.00, 2 2.00, 3 3.00, are : 0 1.92, 1 0.00, 2 0.00, 3 0.00, doing : 0 1.92, 1 0.00, 2 0.00, 3 0.00, just : 0 1.92, 1 0.00, 2 0.00, 3 0.00, do : 0 1.92, 1 0.00, 2 0.00, 3 0.00, would : 0 0.00, 1 1.51, 2 0.00, 3 1.51, work : 0 0.00, 1 1.92, 2 0.00, 3 0.00, if : 0 0.00, 1 1.51, 2 0.00, 3 3.02, won : 0 0.00, 1 1.92, 2 0.00, 3 0.00, the : 0 0.00, 1 1.92, 2 0.00, 3 0.00, lottery : 0 0.00, 1 1.92, 2 0.00, 3 0.00, like : 0 0.00, 1 0.00, 2 3.83, 3 0.00, python : 0 0.00, 1 0.00, 2 9.58, 3 0.00, he : 0 0.00, 1 0.00, 2 1.92, 3 0.00, likes : 0 0.00, 1 0.00, 2 1.92, 3 0.00, we : 0 0.00, 1 0.00, 2 1.92, 3 0.00, everybody : 0 0.00, 1 0.00, 2 1.92, 3 0.00, loves : 0 0.00, 1 0.00, 2 1.92, 3 0.00, said : 0 0.00, 1 0.00, 2 1.92, 3 0.00, wish : 0 0.00, 1 0.00, 2 1.92, 3 0.00, were : 0 0.00, 1 0.00, 2 1.51, 3 1.51, programmer : 0 0.00, 1 0.00, 2 1.92, 3 0.00, can : 0 0.00, 1 0.00, 2 0.00, 3 1.92, stay : 0 0.00, 1 0.00, 2 0.00, 3 1.92, here : 0 0.00, 1 0.00, 2 0.00, 3 1.92, want : 0 0.00, 1 0.00, 2 0.00, 3 1.92, to : 0 0.00, 1 0.00, 2 0.00, 3 1.92,

Another Simple Example

We will use another simple example to illustrate the previously introduced concepts. We use a sentence which contains solely different words. The corpus consists of this sentence and reduced versions of it, i.e. cutting of words from the end of the sentence.

from sklearn.feature_extraction import text

words = "Cold wind blows over the cornfields".split()

corpus = []

for i in range(1, len(words)+1):

corpus.append(" ".join(words[:i]))

print(corpus)

OUTPUT:

['Cold', 'Cold wind', 'Cold wind blows', 'Cold wind blows over', 'Cold wind blows over the', 'Cold wind blows over the cornfields']

vectorizer = text.CountVectorizer()

vectorizer = vectorizer.fit(corpus)

vectorized_text = vectorizer.transform(corpus)

tf_idf = text.TfidfTransformer()

tf_idf.fit(vectorized_text)

tf_idf.idf_

OUTPUT:

array([1.33647224, 1. , 2.25276297, 1.55961579, 1.84729786,

1.15415068])

word_weight_list = list(zip(vectorizer.get_feature_names_out(), tf_idf.idf_))

word_weight_list.sort(key=lambda x:x[1]) # sort list by the weights (2nd component)

for word, idf_weight in word_weight_list:

print(f"{word:15s}: {idf_weight:4.3f}")

OUTPUT:

cold : 1.000 wind : 1.154 blows : 1.336 over : 1.560 the : 1.847 cornfields : 2.253

TfidF = text.TfidfTransformer(smooth_idf=True, use_idf=True)

tfidf = TfidF.fit_transform(vectorized_text)

word_weight_list = list(zip(vectorizer.get_feature_names_out(), tf_idf.idf_))

word_weight_list.sort(key=lambda x:x[1]) # sort list by the weights (2nd component)

for word, idf_weight in word_weight_list:

print(f"{word:15s}: {idf_weight:4.3f}")

OUTPUT:

cold : 1.000 wind : 1.154 blows : 1.336 over : 1.560 the : 1.847 cornfields : 2.253

Working With Real Data

scikit-learn contains a dataset from real newsgroups, which can be used for our purposes:

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import CountVectorizer

import numpy as np

# Create our vectorizer

vectorizer = CountVectorizer()

# Let's fetch all the possible text data

newsgroups_data = fetch_20newsgroups()

Let us have a closer look at this data. As with all the other data sets in sklearn we can find the actual data under the attribute data:

print(newsgroups_data.data[0])

OUTPUT:

From: [email protected] (where's my thing) Subject: WHAT car is this!? Nntp-Posting-Host: rac3.wam.umd.edu Organization: University of Maryland, College Park Lines: 15 I was wondering if anyone out there could enlighten me on this car I saw the other day. It was a 2-door sports car, looked to be from the late 60s/ early 70s. It was called a Bricklin. The doors were really small. In addition, the front bumper was separate from the rest of the body. This is all I know. If anyone can tellme a model name, engine specs, years of production, where this car is made, history, or whatever info you have on this funky looking car, please e-mail. Thanks, - IL ---- brought to you by your neighborhood Lerxst ----

print(newsgroups_data.data[200])

OUTPUT:

Subject: Re: "Proper gun control?" What is proper gun cont From: [email protected] (John Kim) Organization: Harvard University Science Center Nntp-Posting-Host: scws8.harvard.edu Lines: 17 In article <[email protected]> [email protected] (Kirk Hays) writes: >I'd like to point out that I was in error - "Terminator" began posting only >six months before he purchased his first firearm, according to private email >from him. >I can't produce an archived posting of his earlier than January 1992, >and he purchased his first firearm in March 1992. >I guess it only seemed like years. >Kirk Hays - NRA Life, seventh generation. I first read and consulted rec.guns in the summer of 1991. I just purchased my first firearm in early March of this year. NOt for lack of desire for a firearm, you understand. I could have purchased a rifle or shotgun but didn't want one. -Case Kim

We create the vectorizer:

vectorizer.fit(newsgroups_data.data)

OUTPUT:

CountVectorizer()CountVectorizer()

Let's have a look at the first n words:

counter = 0

n = 10

for word, index in vectorizer.vocabulary_.items():

print(word, index)

counter += 1

if counter > n:

break

OUTPUT:

from 56979 lerxst 75358 wam 123162 umd 118280 edu 50527 where 124031 my 85354 thing 114688 subject 111322 what 123984 car 37780

We can turn the newsgroup postings into arrays. We do it with the first one:

a = vectorizer.transform([newsgroups_data.data[0]]).toarray()[0]

print(a)

OUTPUT:

[0 0 0 ... 0 0 0]

The vocabulary is huge This is why we see mostly zeros.

len(vectorizer.vocabulary_)

OUTPUT:

130107

There are a lot of 'rubbish' words in this vocabulary. rubish means seen from the perspective of machine learning. For machine learning purposes words like 'Subject', 'From', 'Organization', 'Nntp-Posting-Host', 'Lines' and many others are useless, because they occur in all or in most postings. The technical 'garbage' from the newsgroup can be easily stripped off. We can fetch it differently. Stating that we do not want 'headers', 'footers' and 'quotes':

newsgroups_data_cleaned = fetch_20newsgroups(remove=('headers', 'footers', 'quotes'))

print(newsgroups_data_cleaned.data[0])

OUTPUT:

I was wondering if anyone out there could enlighten me on this car I saw the other day. It was a 2-door sports car, looked to be from the late 60s/ early 70s. It was called a Bricklin. The doors were really small. In addition, the front bumper was separate from the rest of the body. This is all I know. If anyone can tellme a model name, engine specs, years of production, where this car is made, history, or whatever info you have on this funky looking car, please e-mail.

Let's have a look at the complete posting:

print(newsgroups_data.data[0])

OUTPUT:

From: [email protected] (where's my thing) Subject: WHAT car is this!? Nntp-Posting-Host: rac3.wam.umd.edu Organization: University of Maryland, College Park Lines: 15 I was wondering if anyone out there could enlighten me on this car I saw the other day. It was a 2-door sports car, looked to be from the late 60s/ early 70s. It was called a Bricklin. The doors were really small. In addition, the front bumper was separate from the rest of the body. This is all I know. If anyone can tellme a model name, engine specs, years of production, where this car is made, history, or whatever info you have on this funky looking car, please e-mail. Thanks, - IL ---- brought to you by your neighborhood Lerxst ----

vectorizer_cleaned = vectorizer.fit(newsgroups_data_cleaned.data)

len(vectorizer_cleaned.vocabulary_)

OUTPUT:

101631

So, we got rid of more than 30000 words, but with more than a 100000 words is it still very large.

We can also directly separate the newsgroup feeds into a train and test set:

newsgroups_train = fetch_20newsgroups(subset='train',

remove=('headers', 'footers', 'quotes'))

newsgroups_test = fetch_20newsgroups(subset='test',

remove=('headers', 'footers', 'quotes'))

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.naive_bayes import MultinomialNB

from sklearn import metrics

vectorizer = CountVectorizer()

train_data = vectorizer.fit_transform(newsgroups_train.data)

# creating a classifier

classifier = MultinomialNB(alpha=.01)

classifier.fit(train_data, newsgroups_train.target)

test_data = vectorizer.transform(newsgroups_test.data)

predictions = classifier.predict(test_data)

accuracy_score = metrics.accuracy_score(newsgroups_test.target,

predictions)

f1_score = metrics.f1_score(newsgroups_test.target,

predictions,

average='macro')

print("Accuracy score: ", accuracy_score)

print("F1 score: ", f1_score)

OUTPUT:

Accuracy score: 0.6460435475305364 F1 score: 0.6203806145034193

Stop Words

So far we added all the words to the vocabulary. However, it is questionable whether words like "the", "am", "were" or similar words should be included at all, since they usually do not provide any significant semantic contribution for a text. In other words: They have limited predictive power. It would therefore make sense to exclude such words from editing, i.e. inclusion in the dictionary. This means we have to provide a list of words which should be neglected, i.e. being filtered out before or after processing text. In natural text recognition such words are usually called "stop words". There is no single universal list of stop words defined, which could be used by all natural language processing tools. Usually, stop words consist of the most frequently used words in a language. "Stop words" can be individually chosen for a given task.

By the way, stop words are an idea which is quite old. It goes back to 1959 and Hans Peter Luhn, one of the pioneers in information retrieval.

There are different ways to provide stop words in sklearn:

- Explicit list of stop words

- Automatically created stop words

We will start with individual stop words:

Indivudual Stop Words

from sklearn.feature_extraction.text import CountVectorizer

corpus = ["A horse, a horse, my kingdom for a horse!",

"Horse sense is the thing a horse has which keeps it from betting on people."

"I’ve often said there is nothing better for the inside of the man, than the outside of the horse.",

"A man on a horse is spiritually, as well as physically, bigger then a man on foot.",

"No heaven can heaven be, if my horse isn’t there to welcome me."]

cv = CountVectorizer(stop_words=["my", "for","the", "has", "than", "if",

"from", "on", "of", "it", "there", "ve",

"as", "no", "be", "which", "isn", "to",

"me", "is", "can", "then"])

count_vector = cv.fit_transform(corpus)

count_vector.shape

cv.vocabulary_

OUTPUT:

{'horse': 5,

'kingdom': 8,

'sense': 16,

'thing': 18,

'keeps': 7,

'betting': 1,

'people': 13,

'often': 11,

'said': 15,

'nothing': 10,

'better': 0,

'inside': 6,

'man': 9,

'outside': 12,

'spiritually': 17,

'well': 20,

'physically': 14,

'bigger': 2,

'foot': 3,

'heaven': 4,

'welcome': 19}

sklearn contains default stop words, which are implemented as a frozenset and it can be accessed with text.ENGLISH_STOP_WORDS:

from sklearn.feature_extraction import text

n = 25

print(str(n) + " arbitrary words from ENGLISH_STOP_WORDS:")

counter = 0

for word in text.ENGLISH_STOP_WORDS:

if counter == n - 1:

print(word)

break

print(word, end=", ")

counter += 1

OUTPUT:

25 arbitrary words from ENGLISH_STOP_WORDS: afterwards, elsewhere, also, rather, mostly, bill, alone, any, every, myself, keep, none, five, upon, very, de, forty, something, thereafter, anyway, nobody, besides, not, could, much

We can use stop words in our 20newsgroups classification problem:

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.naive_bayes import MultinomialNB

from sklearn import metrics

vectorizer = CountVectorizer(stop_words=list(text.ENGLISH_STOP_WORDS))

vectors = vectorizer.fit_transform(newsgroups_train.data)

# creating a classifier

classifier = MultinomialNB(alpha=.01)

classifier.fit(vectors, newsgroups_train.target)

vectors_test = vectorizer.transform(newsgroups_test.data)

predictions = classifier.predict(vectors_test)

accuracy_score = metrics.accuracy_score(newsgroups_test.target,

predictions)

f1_score = metrics.f1_score(newsgroups_test.target,

predictions,

average='macro')

print("accuracy score: ", accuracy_score)

print("F1-score: ", f1_score)

OUTPUT:

accuracy score: 0.6526818906001062 F1-score: 0.6268816896587931

Automatically Created Stop Words

As in many other cases, it is a good idea to look for ways to automatically define a list of stop words. A list that is or should be ideally adapted to the problem.

To automatically create a stop word list, we will start with the parameter min_df of CountVectorizer. When you set this threshold parameter, terms that have a document frequency strictly lower than the given threshold will be ignored. This value is also called cut-off in the literature. If a float value in the range of [0.0, 1.0] is used, the parameter represents a proportion of documents. An integer will be treated as absolute counts. This parameter is ignored if vocabulary is not None.

corpus = ["""People say you cannot live without love,

but I think oxygen is more important""",

"Sometimes, when you close your eyes, you cannot see."

"A horse, a horse, my kingdom for a horse!",

"""Horse sense is the thing a horse has which

keeps it from betting on people."""

"""I’ve often said there is nothing better for

the inside of the man, than the outside of the horse.""",

"""A man on a horse is spiritually, as well as physically,

bigger then a man on foot.""",

"""No heaven can heaven be, if my horse isn’t there

to welcome me."""]

cv = CountVectorizer(min_df=2)

count_vector = cv.fit_transform(corpus)

cv.vocabulary_

OUTPUT:

{'people': 7,

'you': 9,

'cannot': 0,

'is': 3,

'horse': 2,

'my': 5,

'for': 1,

'on': 6,

'there': 8,

'man': 4}

Hardly any words from our corpus text are left. Because we have only few documents (strings) in our corpus and also because these texts are very short, the number of words which occur in less then two documents is very high. We eliminated all the words which occur in less two documents.

We can also see the words which have been chosen as stopwords by looking at cv.stop_words_:

cv.stop_words_

OUTPUT:

{'as',

'be',

'better',

'betting',

'bigger',

'but',

'can',

'close',

'eyes',

'foot',

'from',

'has',

'heaven',

'if',

'important',

'inside',

'isn',

'it',

'keeps',

'kingdom',

'live',

'love',

'me',

'more',

'no',

'nothing',

'of',

'often',

'outside',

'oxygen',

'physically',

'said',

'say',

'see',

'sense',

'sometimes',

'spiritually',

'than',

'the',

'then',

'thing',

'think',

'to',

've',

'welcome',

'well',

'when',

'which',

'without',

'your'}

print("number of docus, size of vocabulary, stop_words list size")

for i in range(1, len(corpus)):

cv = CountVectorizer(min_df=i)

count_vector = cv.fit_transform(corpus)

len_voc = len(cv.vocabulary_)

len_stop_words = len(cv.stop_words_)

print(f"{i:10d} {len_voc:15d} {len_stop_words:19d}")

OUTPUT:

number of docus, size of vocabulary, stop_words list size

1 60 0

2 10 50

3 2 58

4 1 59

Another parameter of CountVectorizer with which we can create a corpus-specific stop_words_list is max_df. It can be a float values between 0.0 and 1.0 or an integer. the default value is 1.0, i.e. the float value 1.0 and not an integer 1! When building the vocabulary all terms that have a document frequency strictly higher than the given threshold will be ignored. If this parameter is given as a float betwenn 0.0 and 1.0., the parameter represents a proportion of documents. This parameter is ignored if vocabulary is not None.

Let us use again our previous corpus for an example.

cv = CountVectorizer(max_df=0.20)

count_vector = cv.fit_transform(corpus)

cv.stop_words_

OUTPUT:

{'cannot', 'for', 'horse', 'is', 'man', 'my', 'on', 'people', 'there', 'you'}

Live Python training

See our Python training courses

Exercises

Exercise 1

In the subdirectory 'books' you will find some books:

- Virginia Woolf: Night and Day

- Samuel Butler: The Way of all Flesh

- Herman Melville: Moby Dick

- David Herbert Lawrence: Sons and Lovers

- Daniel Defoe: The Life and Adventures of Robinson Crusoe

- James Joyce: Ulysses

Use these novels as the corpus and create a word count vector.

Exercise 2

Turn the previously calculated 'word count vector' into a dense ndarray representation.

Exercise 3

Let us have another example with a different corpus. The five strings are famous quotes from

- William Shakespeare

- W.C. Fields

- Ronald Reagan

- John Steinbeck

- Author unknown

Compute the IDF values!

quotes = ["A horse, a horse, my kingdom for a horse!",

"Horse sense is the thing a horse has which keeps it from betting on people."

"I’ve often said there is nothing better for the inside of the man, than the outside of the horse.",

"A man on a horse is spiritually, as well as physically, bigger then a man on foot.",

"No heaven can heaven be, if my horse isn’t there to welcome me."]

Solutions

Solution to Exercise 1

corpus = []

books = ["night_and_day_virginia_woolf.txt",

"the_way_of_all_flash_butler.txt",

"moby_dick_melville.txt",

"sons_and_lovers_lawrence.txt",

"robinson_crusoe_defoe.txt",

"james_joyce_ulysses.txt"]

path = "books"

corpus = []

for book in books:

txt = open(path + "/" + book).read()

corpus.append(txt)

[book[:30] for book in corpus]

OUTPUT:

['The Project Gutenberg EBook of', 'The Project Gutenberg eBook, T', '\nThe Project Gutenberg EBook o', 'The Project Gutenberg EBook of', 'The Project Gutenberg eBook, T', '\nThe Project Gutenberg EBook o']

We have to get rid of the Gutenberg header and footer, because it doesn't belong to the novels. We can see by looking at the texts that the authors works begins after lines of the following kind

***START OF THIS PROJECT GUTENBERG ... ***

The footer of the texts start with this line:

***END OF THIS PROJECT GUTENBERG EBOOK ...***

There may or may not be a space after the first three stars or instead of "the" there may be "this".

We can use regular expressions to find the starting point of the novels:

from sklearn.feature_extraction import text

import re

corpus = []

books = ["night_and_day_virginia_woolf.txt",

"the_way_of_all_flash_butler.txt",

"moby_dick_melville.txt",

"sons_and_lovers_lawrence.txt",

"robinson_crusoe_defoe.txt",

"james_joyce_ulysses.txt"]

path = "books"

corpus = []

for book in books:

txt = open(path + "/" + book).read()

text_begin = re.search(r"\*\*\* ?START OF (THE|THIS) PROJECT.*?\*\*\*", txt, re.DOTALL)

text_end = re.search(r"\*\*\* ?END OF (THE|THIS) PROJECT.*?\*\*\*", txt, re.DOTALL)

corpus.append(txt[text_begin.end():text_end.start()])

vectorizer = text.CountVectorizer()

vectorizer.fit(corpus)

token_count_matrix = vectorizer.transform(corpus)

print(token_count_matrix)

OUTPUT:

(0, 4) 2 (0, 35) 1 (0, 60) 1 (0, 79) 1 (0, 131) 1 (0, 221) 1 (0, 724) 6 (0, 731) 5 (0, 734) 1 (0, 743) 5 (0, 761) 1 (0, 773) 1 (0, 779) 1 (0, 780) 1 (0, 781) 23 (0, 790) 1 (0, 804) 1 (0, 809) 412 (0, 810) 36 (0, 817) 2 (0, 823) 4 (0, 824) 19 (0, 825) 3 (0, 828) 11 (0, 829) 1 : : (5, 42156) 5 (5, 42157) 1 (5, 42158) 1 (5, 42159) 2 (5, 42160) 2 (5, 42161) 106 (5, 42165) 1 (5, 42166) 2 (5, 42167) 1 (5, 42172) 2 (5, 42173) 4 (5, 42174) 1 (5, 42175) 1 (5, 42176) 1 (5, 42177) 1 (5, 42178) 3 (5, 42181) 1 (5, 42182) 1 (5, 42183) 3 (5, 42184) 1 (5, 42185) 2 (5, 42186) 1 (5, 42187) 1 (5, 42188) 2 (5, 42189) 1

print("Number of words in vocabulary: ", len(vectorizer.vocabulary_))

OUTPUT:

Number of words in vocabulary: 42192

Solution to Exercise 2

All you have to do is applying the method toarray to get the token_count_matrix:

token_count_matrix.toarray()

OUTPUT:

array([[ 0, 0, 0, ..., 0, 0, 0],

[19, 0, 0, ..., 0, 0, 0],

[20, 0, 0, ..., 0, 1, 1],

[ 0, 0, 1, ..., 0, 0, 0],

[ 0, 0, 0, ..., 0, 0, 0],

[11, 1, 0, ..., 1, 0, 0]])

Solution to Exercise 3

from sklearn.feature_extraction import text

# our corpus:

quotes = ["A horse, a horse, my kingdom for a horse!",

"Horse sense is the thing a horse has which keeps it from betting on people."

"I’ve often said there is nothing better for the inside of the man, than the outside of the horse.",

"A man on a horse is spiritually, as well as physically, bigger then a man on foot.",

"No heaven can heaven be, if my horse isn’t there to welcome me."]

vectorizer = text.CountVectorizer()

vectorizer.fit(quotes)

vectorized_text = vectorizer.fit_transform(quotes)

tfidf_transformer = text.TfidfTransformer(smooth_idf=True,use_idf=True)

tfidf_transformer.fit(vectorized_text)

"""

alternative way to output the data:

import pandas as pd

df_idf = pd.DataFrame(tfidf_transformer.idf_,

index=vectorizer.get_feature_names(),

columns=["idf_weight"])

df_idf.sort_values(by=['idf_weights']) # sorting data

print(df_idf)

"""

print(f"{'word':15s}: idf_weight")

word_weight_list = list(zip(vectorizer.get_feature_names_out(), tfidf_transformer.idf_))

word_weight_list.sort(key=lambda x:x[1]) # sort list by the weights (2nd component)

for word, idf_weight in word_weight_list:

print(f"{word:15s}: {idf_weight:4.3f}")

OUTPUT:

word : idf_weight horse : 1.000 for : 1.511 is : 1.511 man : 1.511 my : 1.511 on : 1.511 there : 1.511 as : 1.916 be : 1.916 better : 1.916 betting : 1.916 bigger : 1.916 can : 1.916 foot : 1.916 from : 1.916 has : 1.916 heaven : 1.916 if : 1.916 inside : 1.916 isn : 1.916 it : 1.916 keeps : 1.916 kingdom : 1.916 me : 1.916 no : 1.916 nothing : 1.916 of : 1.916 often : 1.916 outside : 1.916 people : 1.916 physically : 1.916 said : 1.916 sense : 1.916 spiritually : 1.916 than : 1.916 the : 1.916 then : 1.916 thing : 1.916 to : 1.916 ve : 1.916 welcome : 1.916 well : 1.916 which : 1.916

Live Python training

See our Python training courses

Exercises

Exercise 1

Perform sentiment analysis on IMDb movie reviews using TF-IDF features and a machine learning classifier to predict whether a review expresses positive or negative sentiment.

Solutions

Exercise 1

import pandas as pd

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.ensemble import RandomForestClassifier

from sklearn.pipeline import Pipeline

from sklearn.metrics import classification_report

from sklearn.model_selection import train_test_split

# Load the training and testing data from CSV files

train_data = pd.read_csv("../data/IMDB/Train.csv")

test_data = pd.read_csv("../data/IMDB/Test.csv")

# Split the data into features (reviews) and labels (sentiments)

X_train, y_train = train_data['text'], train_data['label']

X_test, y_test = test_data['text'], test_data['label']

# Initialize TfidfVectorizer

vectorizer = TfidfVectorizer(max_features=5000, stop_words='english')

# Initialize Random Forest classifier

classifier = RandomForestClassifier(n_estimators=100, random_state=42)

# Create a pipeline with TfidfVectorizer and Random Forest

pipeline = Pipeline([

('vectorizer', vectorizer),

('classifier', classifier)

])

# Train the pipeline

pipeline.fit(X_train, y_train)

# Predict on test data

predicted = pipeline.predict(X_test)

# Print classification report

print(classification_report(y_test, predicted))

OUTPUT:

precision recall f1-score support

0 0.85 0.85 0.85 2495

1 0.85 0.85 0.85 2505

accuracy 0.85 5000

macro avg 0.85 0.85 0.85 5000

weighted avg 0.85 0.85 0.85 5000

Live Python training

See our Python training courses

Footnotes

1Logically toarray and todense are the same thing, but toarray returns an ndarray whereas todense returns a matrix. If you consider, what the official Numpy documentation has to say about the numpy.matrix class, you shouldn't use todense! "It is no longer recommended to use this class, even for linear algebra. Instead use regular arrays. The class may be removed in the future." (numpy.matrix) (back)